A group of researchers from Stanford University and Princeton University has put together the largest RGB-D video dataset to date: more than 1,500 scans of more than 700 different locations, totaling 2.5 million views. The dataset, called ScanNet, has been semantically annotated for use in research projects. The purpose of this kind of data, of course, is to teach our future robot overlords to see better and to better understand what they capture. In the process, computers' understanding of physical space should improve significantly. I can't wait. Bring it on.

Pattern Recognition

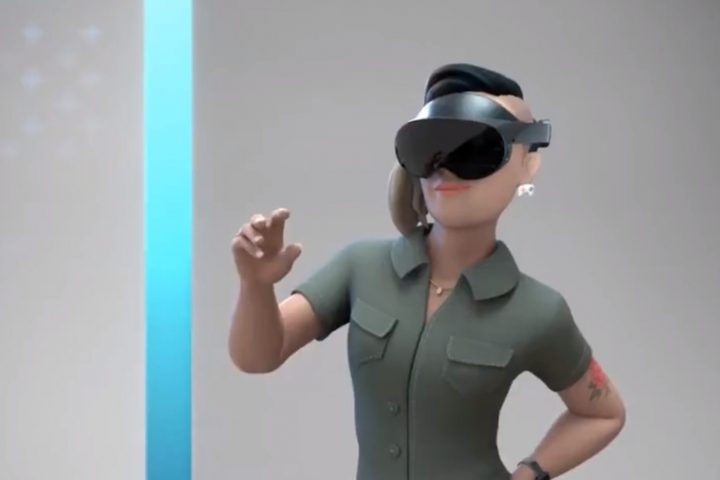

Source: Spatial Maps Can & Will Be So Much More for Mixed Reality